Integrating Vocal Feedback to Optimize Neuromuscular Electrical Stimulation for Vocal Fold Paralysis

Built a physics-based two-mass vocal fold simulation generating 3,000 synthetic patient profiles and trained a CNN-LSTM neural network to decode hidden mechanical parameters from acoustic speech signals, establishing computational viability for a future closed-loop NMES therapy system.

The Problem

Vocal fold paralysis causes breathy, weakened speech by preventing one or both vocal folds from moving properly. Neuromuscular Electrical Stimulation (NMES) therapy offers a promising treatment path, but current protocols rely on fixed stimulation parameters that cannot adapt to each patient's biomechanical state. The mechanical properties that govern vocal fold behavior (tissue stiffness, viscosity, and inter-layer coupling) cannot be observed by clinicians without invasive procedures, and there is no existing system capable of inferring them non-invasively in real time.
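For reference, a generic linearized two-mass model makes these properties explicit as coefficients in the equations of motion. This is an illustrative sketch, not the project's stated equations. Reading "inter-layer coupling" as the spring $k_c$ that links the lower and upper masses is an assumption:

$$
\begin{aligned}
m_1 \ddot{x}_1 + r_1 \dot{x}_1 + k_1 x_1 + k_c\,(x_1 - x_2) &= F_1(t) \\
m_2 \ddot{x}_2 + r_2 \dot{x}_2 + k_2 x_2 + k_c\,(x_2 - x_1) &= F_2(t)
\end{aligned}
$$

The terms in these equations are as follows:

- $x_1$ and $x_2$ are the lateral displacements of the lower and upper masses $m_1$ and $m_2$.
- $k_1$ and $k_2$ are tissue stiffnesses.
- $r_1$ and $r_2$ are damping coefficients standing in for viscosity.
- $F_1(t)$ and $F_2(t)$ are the aerodynamic forces produced by subglottal pressure.

Full phonation models typically add nonlinear spring terms and a collision force for when the folds close.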

The Solution

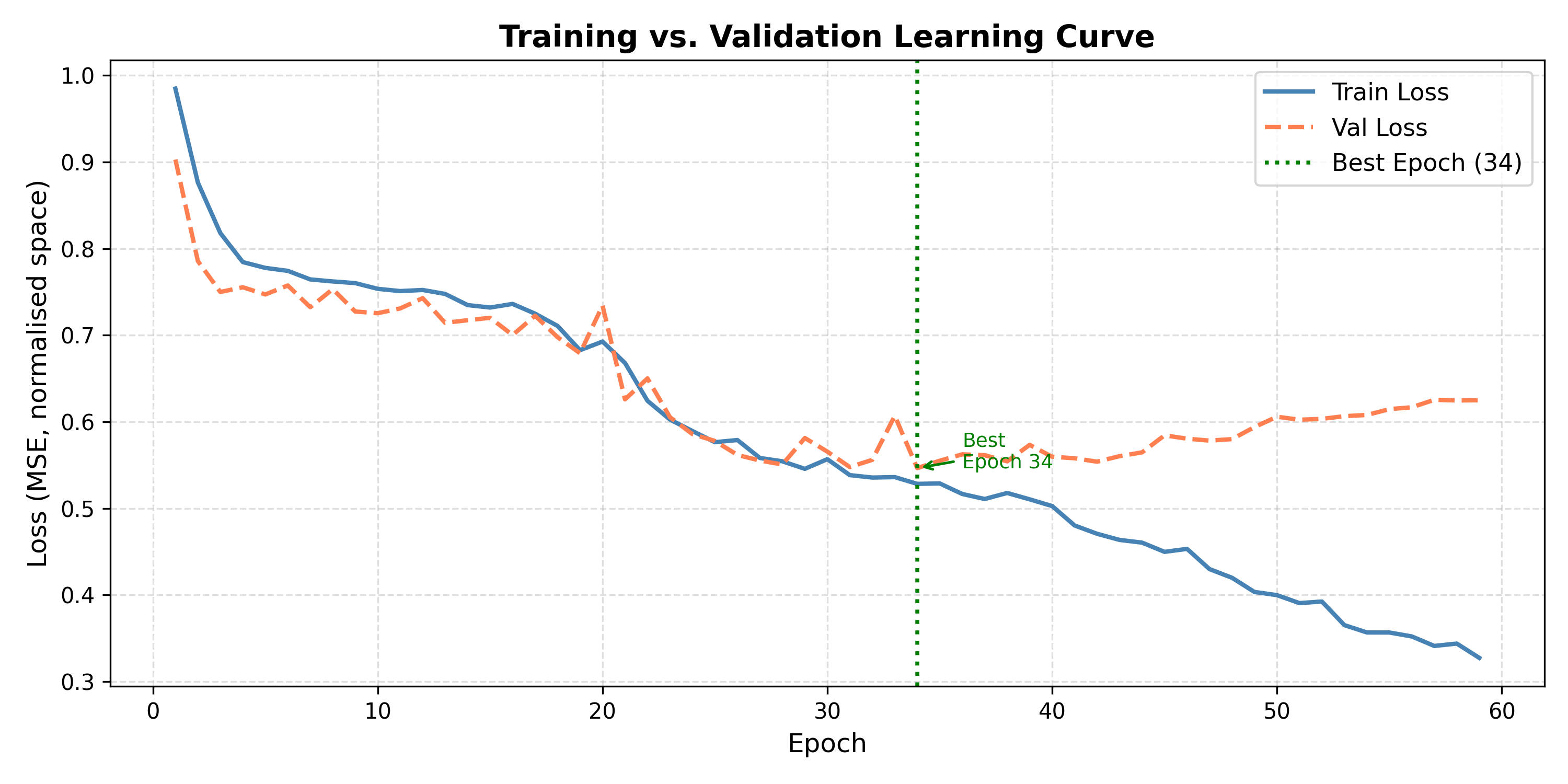

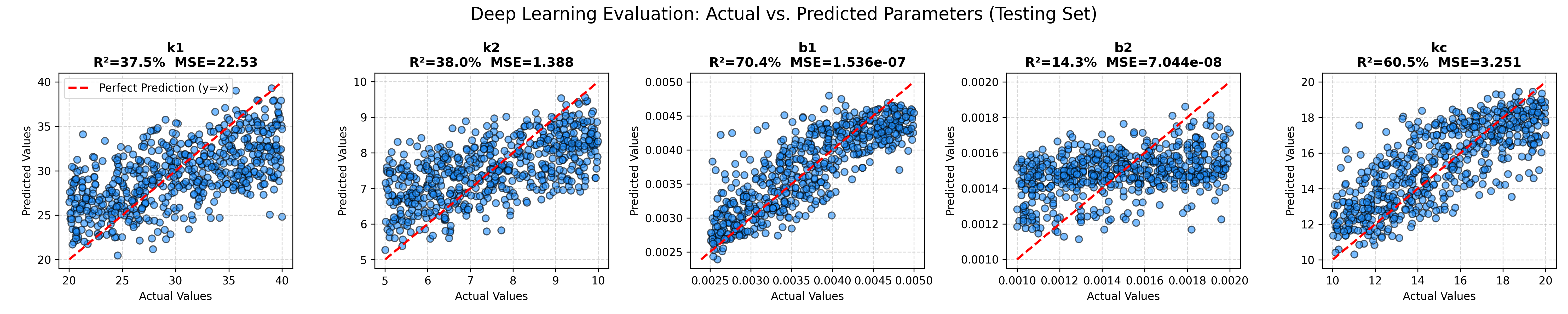

This project builds the foundational sensing layer for a future closed-loop adaptive NMES system. A physics-based simulation models the vocal folds as a two-mass spring-damper system and generates 3,000 synthetic patient profiles spanning healthy to pathological mechanical states. Each profile produces a corresponding acoustic speech signal with biologically realistic noise (tremor, aspiration, and formant drift) that is causally linked to the underlying mechanics. A hybrid CNN-LSTM neural network then solves the inverse problem. It takes the acoustic output as input and predicts the five hidden mechanical parameters.

Recovery is strongest for thyroarytenoid muscle tone (R² = 0.704) and suprahyoid coordination (R² = 0.605). This indicates that degradation of these specific muscle groups produces acoustically decodable signatures. The result supports the computational viability of the concept before the transition to real clinical voice data.
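The simulation step can be sketched in plain Python under several assumptions:

- It integrates only the free (unforced) response of a linear two-mass spring-damper, using semi-implicit Euler.
- It omits the aerodynamic driving force, collision, and formant drift.
- It applies a hypothetical tremor-plus-white-noise model.

All names (`TwoMassParams`, `simulate`, `add_voice_noise`, `sample_profile`, `dominant_frequency`) and all numeric values and ranges are illustrative. They are not the project's code.

```python
import math
import random
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class TwoMassParams:
    """Hypothetical parameters for one synthetic profile (SI units, illustrative)."""
    m1: float = 1.25e-4  # lower mass (kg)
    m2: float = 2.5e-5   # upper mass (kg)
    k1: float = 80.0     # lower-mass stiffness (N/m)
    k2: float = 8.0      # upper-mass stiffness (N/m)
    kc: float = 25.0     # coupling stiffness between the two masses (N/m)
    r1: float = 0.002    # lower-mass damping, standing in for viscosity (N*s/m)
    r2: float = 0.002    # upper-mass damping (N*s/m)


def simulate(p, x0=(1e-3, 1e-3), fs=44_100, duration=0.5):
    """Free response of a linear two-mass spring-damper released from x0.

    Integrated with semi-implicit (symplectic) Euler; returns the lower-mass
    displacement sampled at fs. No aerodynamic force or collision is modeled.
    """
    dt = 1.0 / fs
    x1, x2 = x0
    v1 = v2 = 0.0
    out = []
    for _ in range(int(duration * fs)):
        # Damping, spring and coupling forces on each mass, divided by mass.
        a1 = (-p.r1 * v1 - p.k1 * x1 - p.kc * (x1 - x2)) / p.m1
        a2 = (-p.r2 * v2 - p.k2 * x2 - p.kc * (x2 - x1)) / p.m2
        # Update velocities first, then positions using the new velocities.
        v1 += a1 * dt
        v2 += a2 * dt
        x1 += v1 * dt
        x2 += v2 * dt
        out.append(x1)
    return out


def add_voice_noise(signal, fs, tremor_hz=5.0, tremor_depth=0.05,
                    aspiration_std=0.02, seed=0):
    """Hypothetical noise model: slow sinusoidal amplitude tremor plus white
    Gaussian 'aspiration' noise scaled to the signal peak."""
    rng = random.Random(seed)
    peak = max((abs(s) for s in signal), default=0.0) or 1.0
    return [
        s * (1.0 + tremor_depth * math.sin(2 * math.pi * tremor_hz * i / fs))
        + rng.gauss(0.0, aspiration_std * peak)
        for i, s in enumerate(signal)
    ]


def sample_profile(rng):
    """Draw one hypothetical profile by scaling stiffness, coupling and
    damping around the defaults (ranges are illustrative only)."""
    base = TwoMassParams()
    return replace(
        base,
        k1=base.k1 * rng.uniform(0.5, 1.5),
        kc=base.kc * rng.uniform(0.5, 1.5),
        r1=base.r1 * rng.uniform(0.5, 3.0),
    )


def dominant_frequency(signal, fs):
    """Estimate oscillation frequency (Hz) from upward zero-crossing spacing."""
    ups = [i for i in range(1, len(signal)) if signal[i - 1] < 0 <= signal[i]]
    if len(ups) < 2:
        return 0.0
    return (len(ups) - 1) * fs / (ups[-1] - ups[0])


if __name__ == "__main__":
    fs = 44_100
    rng = random.Random(42)
    for _ in range(3):  # the project generated 3,000 profiles
        p = sample_profile(rng)
        clean = simulate(p, fs=fs)
        noisy = add_voice_noise(clean, fs)
        print(f"k1={p.k1:.1f} N/m, f ~ {dominant_frequency(clean, fs):.0f} Hz, "
              f"{len(noisy)} samples")
```

Semi-implicit Euler is chosen here because, with a small step at audio sample rates, it keeps an undamped oscillator's energy bounded. Explicit Euler, by contrast, slowly injects energy. A realistic pipeline would more likely use a self-oscillating aerodynamic model and a higher-order ODE solver.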

The Outcome
